| KEYNOTE ADDRESS |

Human Hearing teaches the Algorithm – Can we extract something meaningful from audio?

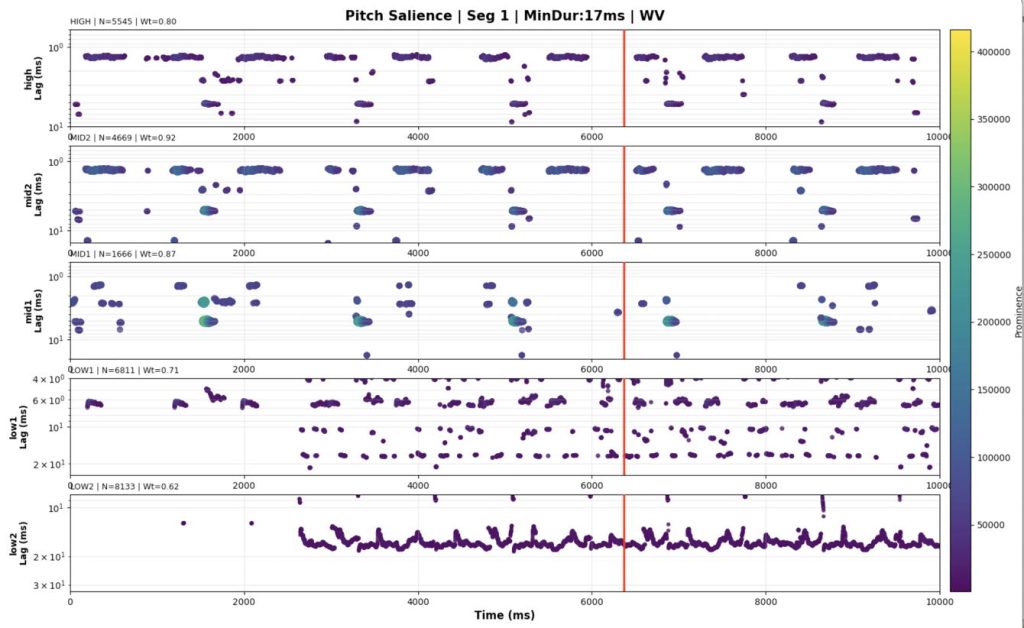

Over the past decades, research into human hearing has yielded groundbreaking insights into the biomechanics and neural processing of the inner ear, the cochlea. Based on this new understanding of pitch and timbre perception, machine-learning models have become very precise in classifying melodies, separating sound sources, and identifying speech and musical timbres. My research focuses on the problem of beat and rhythmic pattern detection, with the aim of identifying and extracting tempo, meter, and musical rhythms from audio sources. I will demonstrate my solution and explore how these models can be combined to inform a modern understanding of music perception. For this purpose, I have teamed up with Dr. Andre Rupp from the University of Heidelberg, who leads the section of biomagnetism in the department of neurology. He is also an expert in auditory image models. We are currently exploring a very interesting piece of electronic dance music by Aphex Twin to see how our models compare with real human brain activity measured by fMRI scans. Our aim is to gain new insights into music cognition and embodied responses to music.

A pitch-o-gram of the electronic music composition “Tha” from Selected Ambient Works 85–92 (1992) by Richard James, aka Aphex Twin (Boenn and Rupp, 2025)

| PARTICIPANTS |

Group no. 1

From Scene to Score: Applying Film Scoring Techniques to Character and Narrative

My research creation project focuses on composing and producing a film score using techniques developed throughout the year. I am scoring a short film by Master’s student Chelsea White that features an AI character interacting with a human character portrayed by Chelsea herself. My compositional focus is genre, narrative support, and character development. Using traditional film scoring approaches, I aim to create atmosphere and emotional clarity that supports the story. AI will be used as a tool to help shape a thematic identity for the AI character, while all creative decisions remain grounded in conventional scoring methods. The outcome of this project will be a completed film score that applies professional composition techniques to support both narrative progression and character development. In films like Interstellar, Pirates of the Caribbean, and Jaws, music plays a crucial role in shaping how audiences connect with characters and experience the story. By exploring similar approaches, my project contributes to a broader understanding of how film scoring can be used as a tool to strengthen character-driven storytelling, offering insight for emerging composers and filmmakers looking to create more emotionally engaging work.

Re-framing the Meaning Within Hip-hop Sampling

In my research project, my goal is to showcase the variety of ways sampling can be creatively used by composing three original songs that incorporate elements from one existing work. Inspired by research from authors Jeremy Tatar and Amanda Sewell, I will examine different sampling techniques hip-hop producers use and the effects they have in illuminating the producers’ reinterpretations of the source material. Although the general attitude surrounding the art of sampling has gradually improved over time, many music scholars and general audiences continue to reject the notion that it is a transformative and creative tool that expresses a new perspective on an existing work. My hope is that my research project will assist in dismantling this sentiment and in further validating the art of sampling, as well as those who practice it, in the musical sphere.

From Yellow Brick Roads to Hidden Codes: Creating a Queer Musical and Cultural Archive of Songs That Reference The Wizard of Oz

This presentation explores how The Wizard of Oz functions as a queer cultural touchstone across generations, turning the film into an ever-evolving archive of identity, resilience, and belonging. Drawing on Matthew Jones’ concept of music as an “affective archive,” I argue that artists who reference The Wizard of Oz connect their queer experiences to deeper queer history and utilise the film within their specific historical context. Looking at examples across generations, from Elton John’s Goodbye Yellow Brick Road to The Beaches’ Lesbian of the Year, we see the lasting impact this film has had on queer culture. Tracing these musical references chronologically shows how Oz imagery has become a shared symbolic language through which queer artists express longing, repression, pride, grief, and self-acceptance. Ultimately, these songs collectively transform The Wizard of Oz into a living archive where new queer voices can connect and build community while honouring those who came before.

Group no. 2

Constructive Interference: Overtones in Barbershop Quartet Music

The human voice is an instrument of incredible complexity. Across the world’s population, voices are one of the things we use to tell people apart. I aim to display mastery over some of these complexities by creating a vocal arrangement and recording that is perfectly tailored to the creation of powerful overtones. I will produce these unique tones through the analysis of vocal harmony. I will analyse harmonies sung at different intervals and with different vowels to determine which conditions are optimal for generating powerful overtones through summation. Just as we dissect the voice for language, I aim to dissect the voice for its effects in harmony. I will use spectral analysis to pull apart and examine elements of vocal harmony to understand how they affect the tone of music. This could help turn popular understanding of vocal harmony towards a more refined and informed perspective. Modern barbershop quartets, including 2025 world champion quartet Lemon Squeezy, tune their harmonic intervals using overtones.

The Role of Psychoacoustics: Auditory Illusions as Tinnitus Therapy

As musicians and audio engineers, our hearing is everything. Protecting it is difficult due to exposure to loud environments. Our hearing can undergo permanent deterioration from birth or exposure to loud sounds. A permanent ringing can occur as a result of the brain compensating for inner ear damage. Scientists call this tinnitus: a ringing that only the affected person can hear. It occurred to me that it resembles the phantom tones produced by auditory illusions, which can trick our brains into hearing a sound only we hear. It can be quite hard to grasp, but I invite you to follow my research on these interactions in the hope of establishing auditory illusions as a non-invasive approach to tinnitus therapy. Similar psychoacoustic research by Townsend and Konkle found that a minimum total harmonic distortion in hearing aids is required for speech perception. While one may think it would be better to have 1% or lower to clean the signal, the psychoacoustics say otherwise. You’ll see me explore these ideas more thoroughly in my presentation.

Exploring Physical Modelling Synthesis as a Compositional Tool in Electronic Dance Music

Physical modelling is a revolutionary synthesis technique that has the potential to change the degree of musical expression, realism, and detail that can be generated from digital sound design. Through the Reaktor libraries PRISM and Steampipe 2, I will design presets that effectively demonstrate the benefits of Digital Waveguide and Modal synthesis, and investigate how each sound design technique evokes emotional qualities that can improve the perception of Electronic Music. A comparison between physical modelling and traditional wavetable synthesis techniques will be evaluated in real time within a live electronic composition, to demonstrate the perceived benefits and drawbacks of each. This project ultimately explores Physical Modelling Synthesis as a compositional tool, and how it can give electronic composers a palette for extended expression in their pieces in ways independent of the compositional material.

Group no. 3

Time Moves On

Our project aims to explore the relationship between adults in our modern society and human beings’ attraction towards nostalgia. Through various visual and audio experiences, we will push the audience towards experiencing the feeling of nostalgia. We will use sound fonts, soundbites, and various other nostalgic audio with the aim of triggering a deep emotional, nostalgic response. We will also use physical childhood objects and video elements to craft a visual addition to this emotional initiator. Our piece will play in a dark room accompanied by a video, props on stage, and lighting effects. Through the use of sensory effects, each member of our audience should leave the space with a unique interpretation of the performance. Through different reactions to the work, we will craft a personal yet interconnected experience between listeners. The featured piece is an emotional audiovisual rollercoaster through nostalgic soundscapes and painful memories.

QEII

QEII is a camcorder piece that explores the liminality of time and space within the realistic confines of Canadian road trip experiences. Using the compositional practices of Boards of Canada with elements of chance presented through early electronic gear, the result is a visual and acousmatic experience that embraces low-fidelity audio for its aesthetic realisation of driving. It is both compositional and journalistic, lending an immersive quality to the liminality presented through the noises along the highway.

Distorted Dissonance

This work mines the territory of distortion, noise, and the outer limits of electric guitar sound as a musical material in its own right. I begin with clean DI recordings of guitar gestures – low drones, dive-bomb squeals, pick scrapes, harmonic swells – then re-amp them digitally in Pro Tools, layering, re-contouring and reshaping them in the box. These materials are compressed into a mass and then split apart using automation, motion in space, and contrasting dynamics. The overall structure follows a trajectory of emergence, chaos, collapse, and convergence. The final movement builds to a unison tempo across the stereo field before ending with a return to silence. I encourage the listener to attend to the materiality of the distortion, the movement of the sound mass and the blurred line between noise and intent.

Dissonant Echoes of Yesterday

This composition is an audio-visual experience intended to take the listener on a journey of sadness and longing for past experiences from better times in their life. The visual aspect of the piece features a looping, heavily distorted video assembled from online clips that depict memories and longing for the past. The audio portion centres on a somewhat distorted but pleasant sound: an arrangement of several synths that follow a fairly ambient progression without much movement. As the piece progresses, more synths enter to build up the progression, and drums are added to help the audience feel as immersed in the experience as possible. I chose to limit variation in the notation because I wanted listeners to experience a feeling and to take the opportunity to think back on memories from their own lives. I also thought it was essential to include a visual aspect, because I believe it adds new meaning and greater immersion from the viewer’s perspective, and can evoke more thoughts than the audio alone would.

909 Jammed

909 Jammed explores the malleability of digital audio by corrupting a set of techno drum samples in real time using code. This piece is inspired by William Basinski’s exploration of physical degradation in artistic practice. While the drum machine plays, the samples begin to fall apart, quickly giving way to rhythmic noise with a glitchy, harsh timbre. I chose to use TR-909 drum machine samples for this project because of their ubiquity in techno music. I’m using the open-source audio programming language Pure Data to modify these samples. This musical piece unfolds over 5 minutes. The built-in synthesizer (designed by Simon Hutchinson) abuses the mechanics of the sample-and-hold object to create a sound that groans, grinds, and pops with colour in an otherwise dark piece. A behind-the-scenes visual element projected onto the wall of the performance space shows the code at work in real time. The waveforms dance and shuffle, slowly coming undone as the piece plays on. As the samples disintegrate, the groove remains intact, allowing noise and techno music to integrate into one another. The final result is a dusty, hard-nosed techno piece composed of samples that are turned to noise in the process of performing.

Fractured Resonance

This project investigates how noise creates emotional responses and how glitch aesthetics and sound fragmentation affect listeners. Piano recordings are processed into abstract textures using granular synthesis and random sequencing techniques. The acousmatic composition Fractured Resonance presents a sonic exploration of beauty through disruptive sound transformations.

GHOSTS and Other Works

Gibson puts his sampling and digital audio skills on display in an experimental breakbeat piece. This project represents the way in which old ideas can “haunt” the present. These ideas can be rearranged in many different styles and patterns, but we are still unable to move past them. Ultimately, I attempt to break away from the constraints of the past into something truly novel. The project takes the Amen break (one of the most widely used samples, and one that has persisted for decades) and chops it into many alternating patterns through a generative digital modular synth patch. Over the course of the piece, I will add an increasing number of digital effects to the mix, eventually transforming the original loop into an unrecognisable wall of sound. Gibson also presents other smaller projects in ambient and experimental sound design, with intricate ethereal soundscapes dominating throughout.

Bio-Industrialism

This project explores the dichotomy between nature and technology. The piece is composed of heavily processed recordings of electronic devices such as a vacuum cleaner, tape loops of nature recordings, and a droning hum. The piece takes the form of a finite song that fluctuates between the natural and the technological. The audience should focus on the various sounds, distorted beyond all reason, to see if they can pick out what each sound originally was.